Updated April 28, 2026

Augmented reality delivers critical information directly into training, procedures, and patient care, without forcing clinicians to look away. It works when it fits real clinical workflows, and falls apart when it doesn’t.

Augmented reality doesn’t replace what clinicians already do. It changes where they look.

Instead of checking a second screen, flipping through imaging, or relying on memory mid-procedure, clinicians see the information directly in front of them: on the patient, on the device, or in context.


Most clinical environments are short on attention.

Between staffing gaps, time pressure, and increasingly complex cases, even small context switches add friction.

AR reduces some of that, not by adding more data, but by putting it exactly where it’s needed.

It’s not perfect. But it’s starting to hold up in real settings. Let’s look at how.

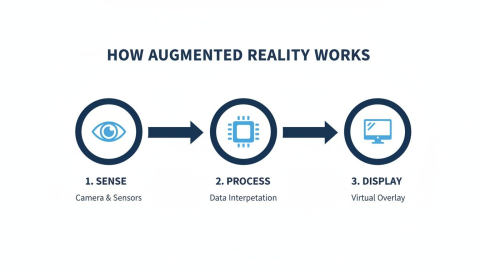

AR systems work by mapping the physical environment, tracking movement in real time, and placing digital models so they stay fixed to what you’re working on.

The system needs millimeter-level accuracy in clinical use. Even a small lag or drift breaks trust and limits usefulness.
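One way to picture the accuracy requirement is as a distance check between where a tracked anchor actually is and where the overlay is being drawn. The sketch below is illustrative only: the 1.0 mm tolerance, the function names, and the coordinates are assumptions made for the example, not values from any specific AR platform or clinical standard.

```python
import math

# Hypothetical tolerance for illustration; real limits depend on the
# procedure and the device's validated specifications.
DRIFT_TOLERANCE_MM = 1.0


def overlay_drift_mm(anchor_mm, overlay_mm):
    """Euclidean distance (mm) between the tracked anchor and the drawn overlay."""
    return math.dist(anchor_mm, overlay_mm)


def within_tolerance(anchor_mm, overlay_mm, tolerance_mm=DRIFT_TOLERANCE_MM):
    """True if the overlay sits within the allowed distance of its anchor."""
    return overlay_drift_mm(anchor_mm, overlay_mm) <= tolerance_mm


# Made-up coordinates in millimeters (x, y, z).
anchor = (10.0, 20.0, 30.0)
stable_overlay = (10.0, 20.0, 30.5)   # 0.5 mm off along z
drifted_overlay = (13.0, 24.0, 30.0)  # 5.0 mm off in the x-y plane

print(within_tolerance(anchor, stable_overlay))   # True
print(within_tolerance(anchor, drifted_overlay))  # False
```

A production system would run a check like this continuously and would also account for latency, not just position, but the idea is the same: once the gap exceeds what the task can tolerate, the overlay stops being trustworthy.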

The setup changes depending on where it’s being used.

Most teams don’t standardize on one approach. They use whatever fits the workflow.

What actually makes these systems useful is the data they pull in.

That also puts pressure on the underlying systems. Real-time rendering, stable visuals, and responsiveness all depend on how well the hardware handles load.

Sixin Zhou, Marketing Manager at LDShop, works with performance-focused hardware environments where rendering stability and responsiveness directly affect user experience.

Zhou says, “When systems are processing visual data in real time, even small delays or inconsistencies become noticeable immediately. Stability isn’t just about performance benchmarks; it’s about whether the user can trust what they’re seeing. That’s where hardware optimization starts to matter.”

Imaging scans, patient records, and procedural steps can all be brought together and displayed at the point of care.

Instead of switching between screens or relying on memory, the information is available where decisions are being made.

Some platforms are already cleared for specific clinical use, like pre-surgical visualization tools, while others are still being tested and refined.

AI helps interpret the data. VR is used for simulation. AR is what brings that information into the working environment, where it can be used in real time.

This is where adoption tends to stick.

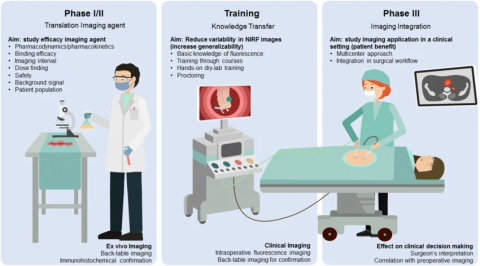

Traditional training has a gap. Students learn from 2D diagrams, then have to mentally translate that into a 3D patient later. It works, but it’s inefficient.

AR removes that translation step.

Students can walk around the anatomy. Break it apart. Put it back together. Repeat procedures without risk.

Residents aren’t just memorizing structures anymore; they’re building spatial understanding that carries into the OR.

That muscle memory shows up early.

This gap shows up when you compare passive study with spatial learning. AR lets learners engage with information in a real-world context.

Kashif Ali, Growth Specialist at PsychologySchoolGuide.net, focuses on how learning environments shape skill retention in technical fields.

Ali says, “What improves retention isn’t just repetition, it’s context. When learners can interact with information in ways that mirror real-world conditions, they don’t have to translate it later. That reduces cognitive load and helps skills transfer more naturally into practice.”

AR doesn’t make someone a better surgeon overnight.

But it does reduce uncertainty at key moments.

That shared view matters.

And time in the OR isn’t abstract. It’s measurable. Roughly $36–$37 per minute when you factor in staff and overhead.

So even shaving a few minutes off setup or execution adds up quickly.
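To make that arithmetic concrete, here is a rough sketch. The $36 to $37 per-minute range comes from the figure above; the minutes saved per case and the annual case volume are hypothetical inputs chosen only to show the calculation.

```python
# Per-minute OR cost range quoted in the article.
COST_PER_MINUTE_LOW = 36.0
COST_PER_MINUTE_HIGH = 37.0


def or_time_savings(minutes_saved_per_case, cases_per_year):
    """Return (low, high) estimated annual savings in dollars."""
    total_minutes = minutes_saved_per_case * cases_per_year
    return (
        total_minutes * COST_PER_MINUTE_LOW,
        total_minutes * COST_PER_MINUTE_HIGH,
    )


# Hypothetical scenario: 5 minutes saved on each of 400 cases per year.
low, high = or_time_savings(minutes_saved_per_case=5, cases_per_year=400)
print(f"Estimated annual savings: ${low:,.0f} to ${high:,.0f}")
# prints "Estimated annual savings: $72,000 to $74,000"
```

The estimate ignores hardware, licensing, and training costs, so it is an upper bound on the time-related benefit, not a return-on-investment figure.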

Less fatigue, too. Which doesn’t get talked about enough.

Outside the OR, the same principle applies. Vein visualization. Ultrasound alignment. Bedside procedures where precision matters but margins are tight.

This part gets overlooked.

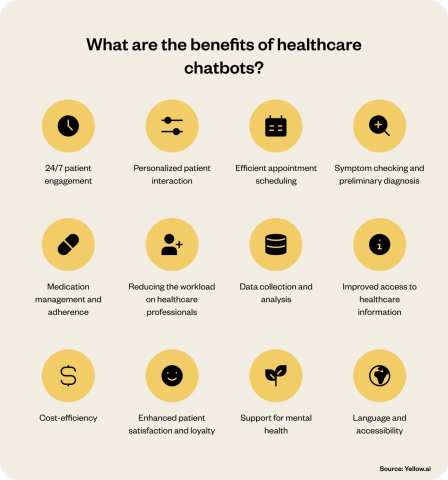

Most patients struggle to understand clinical information.

Handing someone a report or showing them a flat scan rarely changes behavior. But when you place a 3D model in front of them, something they can point at, question, and interact with, the conversation shifts.

It’s similar to how a chatbot helps when you need to ask a quick question, except that AR is far more sophisticated and versatile.

Patients engage more.

They remember more.

They follow through more often.

That kind of clarity matters even more in treatment areas where patients are making ongoing decisions about their care.

In hormone therapy, for example, outcomes depend on factors like dosage, timing, and individual response. In treatments such as testosterone replacement therapy, clinicians typically tailor care over time, using lab results and patient feedback to refine dosing and support consistent progress.

You can see this in rehab settings. AR turns repetitive exercises into something interactive. Patients stick with it longer. Form improves faster because feedback is immediate.

Consistency improves, which improves outcomes.

Not everything improves equally.

Some things stand out:

The cost argument usually comes down to time.

If procedures run smoother and prep steps shrink, the savings can offset the tech. But only if it’s used consistently.

It’s rarely the technology. It’s everything around it.

When you look at rollouts across dozens of hospitals, the pattern is predictable. Teams underestimate infrastructure, training, and workflow alignment.

And compliance doesn’t go away just because the interface changes. Patient data in AR is still protected. If the system influences decisions, regulatory frameworks apply.

This is where projects stall: when AR isn’t introduced in a way people can realistically adopt.

The teams that succeed start small. One use case. One department. Clear metrics.

Then expand.

Hardware will improve. It always does.

Lighter headsets. Better tracking. More stable overlays.

But the bigger shift is integration.

AI models identify risks or segment anatomy. AR displays that insight instantly, without forcing a context switch. Remote specialists annotate live procedures in real time.

That’s where things start to compound.

Patient-facing use will grow, too. Guided care at home. Medication instructions that are actually followed.

The teams that get value out of AR don’t treat it as a broad transformation. They focus.

Not everything needs AR.

But where it fits, it sticks.