Updated April 28, 2026

As AI reshapes how software is built, many SaaS products are reaching the market faster but breaking sooner. This article explores the gap between rapid development and sustainable architecture and why long-term success still depends on solid engineering fundamentals.

Do you recall the early days of your development career? Working on a new project, pushing code that made the product visibly better. You launched and landed your first crucial users and felt thrilled to see your idea come to life.

But lately, that feeling is gone. A simple feature request takes too long to discuss and often leads nowhere. Your team is working hard; you can see it, but the product itself feels stuck.

If this resonates, the problem probably isn’t your team or your business idea.

There's a name for the invisible anchor dragging you down. We call it "Vibe Coding." As Chris Gregori writes in his article "Code is cheap now. Software Isn't": "What are people actually building with these tools? If you look around, the answer is almost everything. In fact, we’ve reached a point of saturation."

Vibe coding is built for short-term velocity. It’s like building a fast, beautiful race car designed to prove you can win. For a proof of concept, it’s often the right approach. But when it comes to scaling a product, you’re no longer trying to win a single race.

So, how do you diagnose an engine you can't see?

Our company has a process called an Impact Week: a comprehensive, no-obligation technical inspection of your software. Lately, our garage has been filled with the same kind of challenges: fast-made projects, built on pure "vibes," that are now stalling. The patterns are becoming clear.

Let’s walk through a recent inspection our team did for a promising SaaS company. They had a great idea, a good product, real customers, and a smart team. But their growth had completely collapsed, and their platform had become their biggest bottleneck.

You’re running a SaaS company where revenue, reputation, and growth all depend on one thing: the software. If it’s slow, buggy, or too fragile to update, it becomes a liability.

It’s a failure you can’t afford. You’ve created demand; customers are ready to pay, but you can’t deliver because the product itself can’t support them.

The entire application, over 13,000 lines of code, was in a single, massive file. In technical terms, it’s a “monolith.”

A monolithic architecture isn't inherently bad. When built intentionally, a modular monolith is a smart, simplified choice for a growing startup.

But this wasn't the case; we found a single file trying to do everything at once. It was handling how the app looks (UI), how it thinks (business logic), and how it remembers data (database calls).

There’s one guarantee with projects like this: they don’t scale. You can’t assign multiple developers to the same areas, as they'll end up overwriting each other and slowing progress.

Innovation stalls, pivots become difficult, and the team struggles to serve customers because the system itself works against them.

According to the 2025 State of AI in Business Report from MIT researchers, only 5% of custom AI pilots successfully scale to production, largely because they are treated as rapid demos rather than engineered systems.

Beyond code organization, the choice of technologies also prevented the platform from scaling.

This highlights the risk of unsupervised vibe coding. When a non-technical user asks AI to “build a data app,” it isn’t considering scalability, long-term maintenance, or concurrent users; it simply generates the most direct response to the prompt.

Without architectural guidance, the AI defaulted to a low-code framework optimized for rapid prototyping, great for demos but not built to support a real product with hundreds of active users.

Technically, the framework relied heavily on in-memory sessions, meaning the server had to hold each active user’s data in RAM. Memory use grows with every concurrent user, so this approach quickly overwhelms system resources. As a result, the platform couldn’t support the growth the sales team was generating.
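To make the scaling problem concrete, here is a rough back-of-the-envelope sketch. The 50 MB per-session figure is an assumption chosen for illustration, not a measurement from the project, and `estimateSessionMemoryMb` is a hypothetical helper.

```typescript
// Rough estimate of server RAM consumed when every active user's
// session data is held in memory on the server.
function estimateSessionMemoryMb(activeUsers: number, mbPerSession: number): number {
  if (activeUsers < 0 || mbPerSession < 0) {
    throw new RangeError("inputs must be non-negative");
  }
  return activeUsers * mbPerSession;
}

// Assumed figure for illustration only (e.g. one loaded dataset per user).
const assumedMbPerSession = 50;

for (const users of [10, 100, 500]) {
  const mb = estimateSessionMemoryMb(users, assumedMbPerSession);
  console.log(`${users} users -> ${mb} MB of session state in RAM`);
}
```

Under that assumption, memory demand grows linearly with concurrent users, which is why a setup that feels fine in a demo can run out of RAM once real traffic arrives.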

This brings us to a distinction that every non-technical founder needs to understand: coding is not architecture.

As software design expert Martin Fowler explains, “poor architecture leads to 'cruft' elements that make systems harder to understand and modify. The result is slower feature delivery and more defects over time.”

Architectural best practices will only grow in importance. Mastering these principles gives you an advantage in building scalable products efficiently and in integrating AI tools into your workflow without sacrificing quality.

Even setting aside the lack of architecture and the fact that everything lived in a single file with thousands of lines, the most alarming issues were related to security and safety.

To use the car analogy, the vehicle had no locks, no seatbelts, and no airbags.

In the rush to make it work, the AI-driven, unsupervised process took dangerous shortcuts in two critical areas: secrets management and test coverage.

A secret manager is a tool or service that securely stores, retrieves, and manages sensitive information like API keys, passwords, database credentials, and certificates. It drastically reduces the risk of breaches caused by leaks or human error. Without a strong strategy, sensitive data often ends up in code repos or config files.

In this project, we found sensitive "keys," including API secrets and database credentials, exposed directly in the code. An API key is essentially a credit card number for your server, allowing access to paid services and sensitive data.

When these keys are hardcoded, anyone with access to your code can gain unrestricted access to critical systems. It’s the digital equivalent of leaving the keys to your company vault taped to the front door.
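As a minimal sketch of the alternative, secrets can be read from the environment (populated by a secret manager or the deployment platform) instead of being written into the code. The names `DATABASE_URL`, `PAYMENT_API_KEY`, `requireSecret`, and `loadConfig` are hypothetical and chosen only for this example.

```typescript
// A map of environment variables; in Node.js this would be process.env.
type Env = Record<string, string | undefined>;

interface AppConfig {
  databaseUrl: string;
  paymentApiKey: string;
}

// Fail fast at startup if a secret is missing, rather than falling
// back to a value hardcoded in the repository.
function requireSecret(env: Env, name: string): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing required secret: ${name}`);
  }
  return value;
}

// Variable names below are assumptions for illustration.
function loadConfig(env: Env): AppConfig {
  return {
    databaseUrl: requireSecret(env, "DATABASE_URL"),
    paymentApiKey: requireSecret(env, "PAYMENT_API_KEY"),
  };
}

// Typical usage at application startup in Node.js:
//   const config = loadConfig(process.env);
```

With this shape, the repository contains only the names of the secrets, never their values, so access to the code no longer implies access to the systems behind it.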

This is a growing risk amplified by AI. According to the State of Secrets Sprawl 2025 report, nearly 24 million secrets were exposed in public repositories in 2024, a 25% increase over the previous year. More concerning, the report found that using AI coding assistants increased the number of secret leaks by 40%.

AI prioritizes speed, not security. Without proper oversight, it can leave critical vulnerabilities exposed.

Another unexpected discovery was that the project had less than 5% test coverage.

Test coverage measures how much of your software is verified by automated checks. These tests run whenever changes are made, ensuring new features don’t break existing functionality. They can cover everything from basic scenarios to edge cases, integrations, and full user flows.

Think of it as a safety check: adding a new feature shouldn’t break something critical, just as installing a new radio shouldn’t cut the brake lines.

With less than 5% test coverage, every bug fix or new feature becomes a gamble. In a codebase like this, sprawling and confined to a single file, developers have no reliable way to know if a change breaks something else until customers report it.
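For readers who have not seen one, an automated test can be as small as the sketch below. The `applyDiscount` rule and the `check` helper are hypothetical; a real project would normally use a test framework, but the idea is the same.

```typescript
// Hypothetical business rule: apply a percentage discount to a price in cents.
function applyDiscount(priceCents: number, percent: number): number {
  if (percent < 0 || percent > 100) {
    throw new RangeError("percent must be between 0 and 100");
  }
  return Math.round(priceCents * (1 - percent / 100));
}

// Tiny stand-in for a test framework's assertion.
function check(condition: boolean, message: string): void {
  if (!condition) {
    throw new Error(`Test failed: ${message}`);
  }
}

function runDiscountTests(): void {
  // Basic scenarios
  check(applyDiscount(10000, 0) === 10000, "0% leaves the price unchanged");
  check(applyDiscount(10000, 25) === 7500, "25% off 100.00 is 75.00");
  // Edge cases
  check(applyDiscount(10000, 100) === 0, "100% makes the item free");
  let rejected = false;
  try {
    applyDiscount(10000, 150);
  } catch (e) {
    rejected = true;
  }
  check(rejected, "discounts above 100% are rejected");
}

runDiscountTests();
```

If a later change breaks the discount rule, these checks fail before the change ships, instead of a customer discovering it on an invoice.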

The cost of poor test coverage is high. According to the 2025 Quality Transformation Report by Tricentis, 81% of companies lose between $500,000 and $5 million annually due to poor software quality, staff churn, and technical debt.

Skipping tests to move fast doesn’t save time; it defers the cost to a much more expensive future.

Beyond poor code quality, the hidden costs were even greater. A single data breach from exposed keys or a week of downtime caused by an undetected bug wouldn’t just cost money; it would weaken customer trust.

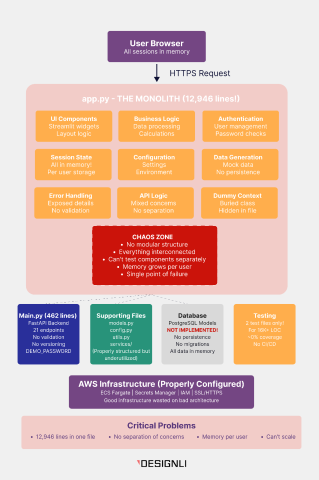

Take a look at the diagram below. This is the actual architectural blueprint of the project mentioned above.

Designli, case study: a scanner of a vibe-coded project (2026).

This image clearly illustrates the “chaos zone.” At the center is a 13,000-line monolith attempting to handle everything at once, with little to no testing in place. Notably, the AWS infrastructure beneath it was actually well configured.

The irony? They built a Formula 1 garage (AWS) to house a duct-taped go-kart.

So how do you fix it?

When founders see this, the first instinct is often, "Can't we just clean up the code? Maybe split the file?"

The hard truth is no.

You can’t turn a go-kart into a production system by polishing it. At some point, you have to stop patching and start re-engineering.

Here is the roadmap we proposed to turn this liability into an asset:

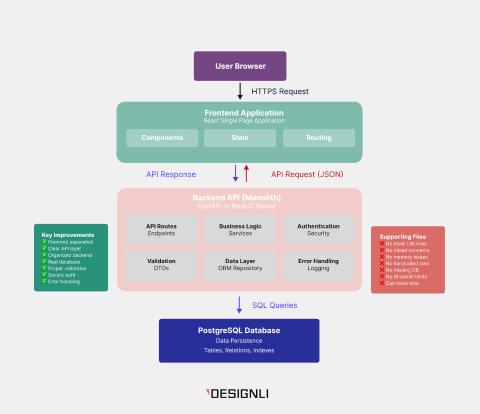

Designli, case study: architecture of a vibe-coded project (2026).

When we presented this to the client, we didn’t propose a patch; we proposed a strategic rebuild using a modern, modular architecture. Untangling a 13,000-line monolith would mean pouring more time and money into a foundation that still could not scale. The most efficient path to scalability was to rebuild the foundation correctly.

The roadmap covered three layers: the front end, the backend, and the database.

First, we proposed replacing the non-scalable interface with React, which enables a component-based front end. With this shift, the interface runs in the user’s browser rather than on the server, reducing server load and the risk of crashes as usage grows. It also creates the foundation for a more polished, responsive, and professional user experience.

For the backend (the engine), we presented the client with two distinct paths.

We strongly recommended Option B.

NestJS is an “opinionated” framework; it enforces a clear structure with controllers, services, and modules, preventing the formation of messy monoliths. Think of it as moving from a team working from memory to one following a precise system. As new developers join, the codebase stays consistent, organized, and scalable by design.
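The separation NestJS enforces can be illustrated without the framework itself. The sketch below is plain TypeScript, not NestJS code: it omits the framework's decorators and dependency injection container, and every class name is hypothetical. It only shows the layering idea of controller, service, and module.

```typescript
interface Invoice {
  id: string;
  amountCents: number;
}

type HttpResult = { status: number; body: Invoice | { error: string } };

// Data access layer: the only place that knows how invoices are stored.
class InvoiceRepository {
  private readonly rows = new Map<string, Invoice>();

  save(invoice: Invoice): void {
    this.rows.set(invoice.id, invoice);
  }

  findById(id: string): Invoice | undefined {
    return this.rows.get(id);
  }
}

// Service layer: business rules, with no knowledge of HTTP.
class InvoiceService {
  private readonly repository: InvoiceRepository;

  constructor(repository: InvoiceRepository) {
    this.repository = repository;
  }

  create(id: string, amountCents: number): Invoice {
    if (amountCents <= 0) {
      throw new Error("amount must be positive");
    }
    const invoice: Invoice = { id, amountCents };
    this.repository.save(invoice);
    return invoice;
  }

  get(id: string): Invoice | undefined {
    return this.repository.findById(id);
  }
}

// Controller layer: translates requests into service calls and responses.
class InvoiceController {
  private readonly service: InvoiceService;

  constructor(service: InvoiceService) {
    this.service = service;
  }

  getInvoice(id: string): HttpResult {
    const invoice = this.service.get(id);
    if (invoice === undefined) {
      return { status: 404, body: { error: "invoice not found" } };
    }
    return { status: 200, body: invoice };
  }
}

// "Module": wires the pieces together in one place.
function createInvoiceModule(): { controller: InvoiceController; service: InvoiceService } {
  const repository = new InvoiceRepository();
  const service = new InvoiceService(repository);
  const controller = new InvoiceController(service);
  return { controller, service };
}

const invoiceModule = createInvoiceModule();
invoiceModule.service.create("inv-1", 1200);
console.log(invoiceModule.controller.getInvoice("inv-1").status);
```

Because each layer has one job, two developers can work on the controller and the business rules at the same time without editing the same file, which is exactly what the single-file monolith made impossible.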

For the "no migrations" issue, we proposed implementing PostgreSQL with strict ORM (Object-Relational Mapping) models and version-controlled migrations.

This is your insurance policy. Migrations track every database change and make it reversible, protecting you from data loss during deployments. You move from a fragile prototype to a reliable, high-performance system built for predictability.
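The idea behind version-controlled migrations can be sketched in a few lines. This is an in-memory illustration only: it does not talk to PostgreSQL or use a real ORM, the schema is modeled as a set of column names, and the `Migration` shape and `migrateTo` function are assumptions made for the example.

```typescript
// The "database schema" is modeled as a set of column names.
type Schema = Set<string>;

interface Migration {
  version: number;
  name: string;
  up(schema: Schema): void;   // apply the change
  down(schema: Schema): void; // undo the change
}

// Each change is a numbered, reviewable unit that lives in version control.
const migrations: Migration[] = [
  {
    version: 1,
    name: "add users.email",
    up: (schema) => { schema.add("users.email"); },
    down: (schema) => { schema.delete("users.email"); },
  },
  {
    version: 2,
    name: "add users.plan",
    up: (schema) => { schema.add("users.plan"); },
    down: (schema) => { schema.delete("users.plan"); },
  },
];

// Move the schema from currentVersion to targetVersion, applying "up"
// steps in ascending order or "down" steps in descending order.
function migrateTo(schema: Schema, currentVersion: number, targetVersion: number): number {
  const ordered = [...migrations].sort((a, b) => a.version - b.version);
  if (targetVersion >= currentVersion) {
    for (const migration of ordered) {
      if (migration.version > currentVersion && migration.version <= targetVersion) {
        migration.up(schema);
      }
    }
  } else {
    for (const migration of ordered.reverse()) {
      if (migration.version <= currentVersion && migration.version > targetVersion) {
        migration.down(schema);
      }
    }
  }
  return targetVersion;
}
```

A deployment that goes wrong after version 2 can call `migrateTo(schema, 2, 1)` to return to the previous known structure, instead of someone editing the production database by hand.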

There’s a moment every founder faces when seeing a plan like this: Rewriting? That sounds expensive. My AI tool built this in days for almost nothing.

The reality is, you didn’t pay up front; you paid in technical debt. And you’re paying for it every day, whether you see it or not. It shows up as slower development, increased security risk, and the inability to ship new features quickly.

While the 13,000-line monolith we reviewed is an extreme case, it’s far from unique. We’re seeing a growing wave of "vibe-coded" projects that look promising on the surface but fail under basic engineering scrutiny. Whether it’s weak architecture, insecure infrastructure, or unstable performance, the fundamentals are often missing.

This isn’t about code purity; it’s about business continuity. Your prototype did its job: it validated your idea quickly and cost-effectively. But relying on it to support a growing product is a risk.

A rewrite becomes part of the next stage. It shifts you from a temporary setup to a scalable foundation built for real usage. The vibe-coding phase is over. Now the engineering phase begins.

Stepping into software development in 2026 means navigating more options than ever. AI tools have reached a level where they are no longer just assistants; they’re seen as viable ways to build. But the fundamentals haven’t changed: architecture and scalability remain essential for long-term success.

Vibe coding is a powerful way to explore ideas and validate concepts quickly. However, pairing it with experienced engineering guidance is what turns potential into a sustainable product.

Building your own ecosystem is exciting, but it starts with one critical step: planning for the long game.