Updated September 17, 2025

The AI revolution is no longer a distant promise; it is happening now. According to Bloomberg, generative AI will become a $1.3 trillion market by 2032. While many companies are actively embracing custom LLM software development, several obstacles still hinder AI/LLM adoption.

In this article, we explore common AI adoption challenges, discuss the technical side of the question, and provide tips and best practices that will help you overcome these problems and build tailored AI-powered solutions.

AI holds immense promise: efficiency gains, innovative features, and a competitive edge. Unfortunately, the path to realizing those benefits is rarely smooth. Over the years, working with clients and refining our own processes at Leobit, we’ve identified a handful of recurring challenges that trip up companies at every stage of adoption.

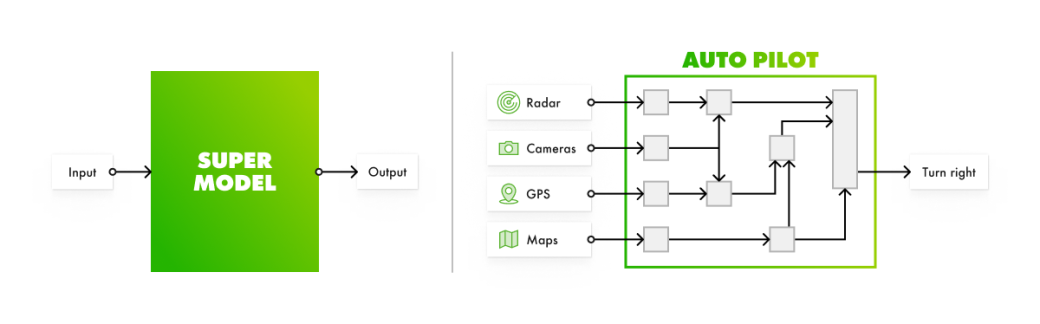

A common misconception we encounter, both from clients and internal teams, is that AI is a plug-and-play miracle. Many stakeholders envision dumping a pile of data (or, sometimes, no data at all) into a system and expect it to instantly automate everything, from sales to support. In reality, artificial intelligence is not one big, mystical solution but a pipeline of interconnected components. Some of them are powered by ML algorithms, others are not.

For instance, Leo, our email auto-response solution, pulls CRM data, applies scoring rules, and follows a defined response template. To craft such a tailored workflow, start by answering these questions:

Without this clarity, you’re left with a shiny toy that frustrates more than it delivers.
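To make the "pipeline of components" idea concrete, here is a minimal sketch of the three steps mentioned above: a CRM lookup, rule-based scoring, and a templated reply. It is not Leo's actual implementation. The CRM data, the scoring thresholds, and every function name are hypothetical and only illustrate how ML and non-ML steps can sit side by side.

```python
from dataclasses import dataclass

# Hypothetical CRM snapshot; a real system would query the CRM's API instead.
FAKE_CRM = {
    "anna@example.com": {"company_size": 250, "is_existing_client": True},
    "bob@example.com": {"company_size": 5, "is_existing_client": False},
}


@dataclass
class Lead:
    email: str
    company_size: int
    is_existing_client: bool


def fetch_lead(email: str) -> Lead:
    """Step 1 (plain code): look up the sender, falling back to an empty profile."""
    record = FAKE_CRM.get(email, {"company_size": 0, "is_existing_client": False})
    return Lead(email=email, **record)


def score_lead(lead: Lead) -> int:
    """Step 2 (plain rules, no ML): made-up scoring rules for illustration."""
    score = 0
    if lead.company_size >= 100:
        score += 50
    if lead.is_existing_client:
        score += 30
    return score


def draft_reply(lead: Lead, score: int) -> str:
    """Step 3: fill a fixed template. An LLM call could personalize the text here."""
    if score >= 50:
        return f"Hi {lead.email}, thanks for reaching out. A manager will contact you today."
    return f"Hi {lead.email}, thanks for reaching out. Here is some material to get started."


def handle_inquiry(email: str) -> str:
    """Run the three steps in order for one incoming email."""
    lead = fetch_lead(email)
    return draft_reply(lead, score_lead(lead))


print(handle_inquiry("anna@example.com"))
```

Only the last step is a natural place for a language model. The lookup and the scoring stay ordinary, testable code.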

Too often, companies dive into AI without a clear “why.” We’ve had clients say, “We need AI in our product,” but when we ask for more detail, they can’t pinpoint what it’s for. This “shiny-object syndrome” leads to misaligned efforts as teams burn through budgets building features no one needs, like an AI chatbot that answers questions users never ask. At Leobit, we’ve learned that AI must serve a purpose tied to a real pain point. For instance, Leo was created in response to the growing need to handle sales inquiries faster.

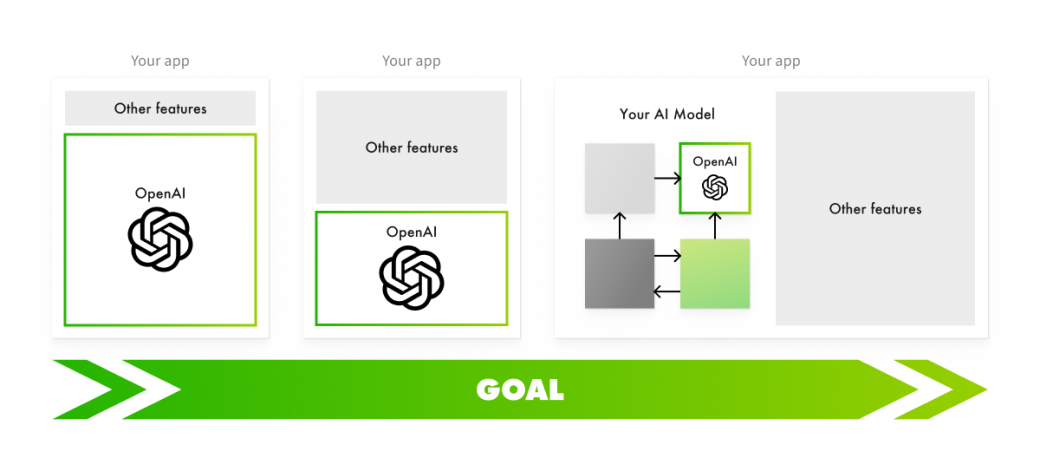

One of the simplest ways to add AI to an existing product is the wrapper approach. AI products and features are built as wrappers over existing models, such as ChatGPT, which the app calls through the OpenAI API. Such an approach is typically easier than other AI adoption methodologies.
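In code, a wrapper can be as thin as the sketch below: build a chat request, send it to the OpenAI Chat Completions endpoint, and return the text of the first choice. The system prompt, the function names, and the choice of `gpt-4o-mini` are assumptions made for illustration. The `send` parameter is a hypothetical seam that lets the example run offline with a stub instead of a real API key.

```python
import json
import os
import urllib.request
from typing import Callable

OPENAI_CHAT_URL = "https://api.openai.com/v1/chat/completions"


def build_payload(user_text: str, model: str = "gpt-4o-mini") -> dict:
    """Wrap the user's text in a chat request; the system prompt is illustrative."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": "You draft short, polite replies to sales inquiries."},
            {"role": "user", "content": user_text},
        ],
    }


def post_to_openai(payload: dict) -> dict:
    """Send the payload over HTTPS. Requires OPENAI_API_KEY in the environment."""
    request = urllib.request.Request(
        OPENAI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {os.environ['OPENAI_API_KEY']}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def ask_model(user_text: str, send: Callable[[dict], dict] = post_to_openai) -> str:
    """The whole 'product': one request out, the first choice's text back."""
    response = send(build_payload(user_text))
    return response["choices"][0]["message"]["content"]


def fake_send(payload: dict) -> dict:
    """Offline stub that mimics the shape of a Chat Completions response."""
    return {"choices": [{"message": {"role": "assistant", "content": "Thanks, we will be in touch."}}]}


print(ask_model("Can you build a mobile app for us?", send=fake_send))
```

Everything product-specific lives in one system prompt, which is what makes this approach quick to build and equally quick for others to copy.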

We at Leobit used this method while developing the initial version of Leo with ChatGPT. The wrapper approach helped us save time and roll out a functional early version of the product in days, but we realized that such simplicity comes with trade-offs that can undermine long-term success.

Differentiating a product in the rapidly evolving domain of AI-powered software can be challenging. Solutions built with the wrapper approach rely on simple techniques and existing models that anyone can replicate. As a result, your product’s edge can evaporate quickly.

The image above highlights the difference between a basic wrapper approach and a more customized method. The first two application architectures rely on the same OpenAI model to varying degrees. In such cases, it might be challenging to gain a competitive edge because AI features replicate each other. Meanwhile, the third architecture stands out by incorporating OpenAI as just one component within a broader AI system.

LLMs are designed to handle a wide range of tasks, but this versatility makes them large and complex, which increases operational costs. Suppose you provide an AI-powered summarization tool for documents. On average, users upload 10 documents per day. Each document is about 10 pages long (500 words per page) and is summarized into a single page.

If you are using GPT-4 32k models to summarize this content, this would cost you about $143.64 per user per month. This breaks down into $119.70 for input processing and $23.94 for output generation, based on rates of $0.06 per 1,000 input tokens and $0.12 per 1,000 output tokens. In most cases, you won’t need to use a model trained on the entire Internet because such a solution is typically inefficient and costly.
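The figures above can be reproduced with a short calculation. The document volumes and per-token rates come from the example itself. The conversion factor of about 1.33 tokens per word and the 30-day month are assumptions that happen to reproduce the quoted numbers; actual token counts depend on the tokenizer and the text.

```python
# Inputs taken from the example in the text.
DOCS_PER_DAY = 10
PAGES_PER_DOC = 10
WORDS_PER_PAGE = 500
SUMMARY_PAGES_PER_DOC = 1
INPUT_PRICE_PER_1K_TOKENS = 0.06   # USD
OUTPUT_PRICE_PER_1K_TOKENS = 0.12  # USD

# Assumptions (not stated in the text) that reproduce the quoted figures.
TOKENS_PER_WORD = 1.33
DAYS_PER_MONTH = 30


def monthly_cost(words_per_day: float, price_per_1k_tokens: float) -> float:
    """Convert a daily word volume into a monthly cost in USD."""
    tokens_per_month = words_per_day * TOKENS_PER_WORD * DAYS_PER_MONTH
    return tokens_per_month / 1000 * price_per_1k_tokens


input_words_per_day = DOCS_PER_DAY * PAGES_PER_DOC * WORDS_PER_PAGE           # 50,000
output_words_per_day = DOCS_PER_DAY * SUMMARY_PAGES_PER_DOC * WORDS_PER_PAGE  # 5,000

input_cost = monthly_cost(input_words_per_day, INPUT_PRICE_PER_1K_TOKENS)
output_cost = monthly_cost(output_words_per_day, OUTPUT_PRICE_PER_1K_TOKENS)

print(f"input ${input_cost:.2f}, output ${output_cost:.2f}, total ${input_cost + output_cost:.2f}")
# prints: input $119.70, output $23.94, total $143.64
```

Because the cost scales linearly with every factor, halving the input (for example, by pre-filtering irrelevant pages with plain code) halves the largest part of the bill.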

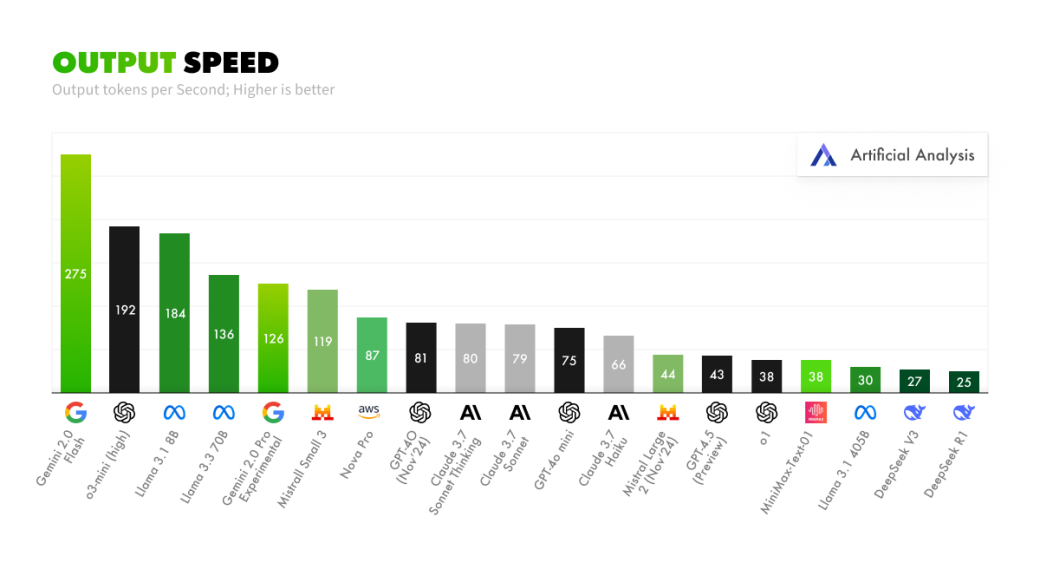

LLMs are usually slower than conventional algorithms because they require massive computational resources to process and generate text. They are built on transformer-based architectures with billions of parameters.

In some cases, slower model performance won’t cause major issues. For example, an AI-powered sales assistant can tolerate slight delays when drafting responses. However, performance becomes critical in workflow automation solutions, such as an invoice processing system, that need to receive the full output before moving to the next step.

Customizing existing LLMs is typically challenging. Fine-tuning can help, but it's often insufficient, costly, and time-consuming. It can also cause problems like performance delays, AI hallucinations, and security concerns. Deep customization requires more than tweaking prompts or adding a small dataset; it demands a rethink of the architecture.

Even with a solid goal, rolling out AI is no walk in the park. It is a multi-step process where each iteration requires careful planning. Companies often stumble because they overlook the following essential aspects:

If you skip these steps, you risk building an unreliable or misaligned system. Such a solution is likely to frustrate users and make ROI elusive.

The AI adoption challenges we describe aren’t unsolvable. With the right approach, you can turn obstacles into opportunities.

Before diving in with AI adoption, take stock of your operations:

For Leo, we first tried a manual email filter. It worked but was slow. Only once the need was proven did we bring in AI to automate scoring and replies.

AI is non-deterministic by design. You may get different outputs with identical inputs, which makes the results rather unpredictable. That’s why jumping in blind is risky. Instead, kick things off with a low-stakes PoC:

A PoC we ran for a client took one day and $300 in API costs. It revealed that GPT-4o-mini was fast but provided vague outputs. Meanwhile, Claude 3.7 Sonnet nailed accuracy. That clarity shaped the client's next move.
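A PoC of this kind can start as a small harness that sends the same prompts to each candidate model several times and records latency and output stability. The sketch below is one possible shape for it, not the harness used in the PoC described above. The models are passed in as plain callables (hypothetical stubs here) so the example runs offline; in a real PoC each callable would wrap an API call.

```python
import itertools
import time
from typing import Callable, Dict, List


def run_poc(
    models: Dict[str, Callable[[str], str]],
    prompts: List[str],
    repeats: int = 3,
) -> Dict[str, dict]:
    """Call every model `repeats` times per prompt and summarize the results."""
    report = {}
    for name, call_model in models.items():
        latencies = []
        stable_prompts = 0
        for prompt in prompts:
            outputs = []
            for _ in range(repeats):
                started = time.perf_counter()
                outputs.append(call_model(prompt))
                latencies.append(time.perf_counter() - started)
            # A prompt counts as "stable" when every repeat returned the same text.
            if len(set(outputs)) == 1:
                stable_prompts += 1
        report[name] = {
            "avg_latency_s": sum(latencies) / len(latencies),
            "stable_share": stable_prompts / len(prompts),
        }
    return report


def steady_model(prompt: str) -> str:
    """Stub that always answers the same way."""
    return "We can help with that."


_variants = itertools.cycle(["Sure, we can help.", "Yes, happy to help!"])


def flaky_model(prompt: str) -> str:
    """Stub that alternates answers to imitate non-deterministic output."""
    return next(_variants)


report = run_poc(
    {"steady": steady_model, "flaky": flaky_model},
    prompts=["Do you build mobile apps?", "What is your hourly rate?"],
)
for name, stats in report.items():
    print(name, stats)
```

Exact string equality is a deliberately strict stability check. For free-form text, a human review or a similarity score is usually a better judge, and accuracy still has to be assessed separately.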

A PoC might prove value, but wrapper solutions hit a wall as costs rise or competition intensifies. To scale, evolve your approach:

Take a foundation model and fine-tune it on your own data, such as past sales emails or support tickets. It’s a middle ground that boosts relevance without starting from scratch. AI doesn’t act as an all-knowing entity. Instead, it needs quality data, well-defined steps, and clear objectives. Use examples to illustrate the difference between effective and ineffective AI outputs.
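Much of the fine-tuning effort goes into shaping your data into training examples. The sketch below converts past inquiry/reply pairs into chat-style JSONL, the format OpenAI's fine-tuning endpoint accepts for chat models; other providers expect different layouts. The sample emails, the system prompt, and the function name are made up for illustration.

```python
import json
from typing import Dict, List

# Made-up examples standing in for real past sales emails.
past_emails: List[Dict[str, str]] = [
    {
        "inquiry": "Do you have experience with .NET migrations?",
        "reply": "Yes, we have completed several .NET migrations. Could you share the current stack?",
    },
    {
        "inquiry": "What is the minimum project size you take on?",
        "reply": "We are happy to start with a small discovery phase. What is your timeline?",
    },
]

SYSTEM_PROMPT = "You answer sales inquiries briefly and end with one clarifying question."


def to_training_line(example: Dict[str, str], system_prompt: str) -> str:
    """Turn one inquiry/reply pair into a single JSONL line in chat format."""
    record = {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": example["inquiry"]},
            {"role": "assistant", "content": example["reply"]},
        ]
    }
    return json.dumps(record, ensure_ascii=False)


# One JSON object per line; this string is what would be saved as a .jsonl file.
training_jsonl = "\n".join(to_training_line(e, SYSTEM_PROMPT) for e in past_emails)
print(training_jsonl)
```

The assistant messages are the "effective outputs" the model learns to imitate, so curating them matters more than the volume of data.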

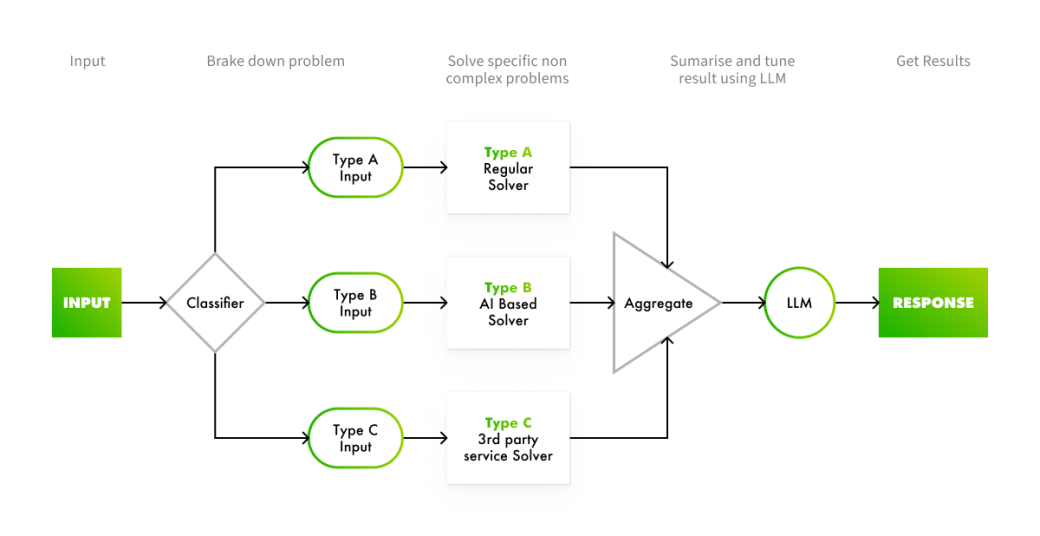

Combine small, specialized models with custom code. For document processing, one model might classify files (invoices vs. contracts), another extracts key info, and a third summarizes. Developing such a solution is faster and cheaper than building a single LLM for this purpose.

Suppose we want to build a system that can extract relevant information from documents, such as invoices, contracts, and receipts. A step-by-step breakdown of such a solution will look as follows:

The key components of the system are:

With this modular approach, each part of the problem is handled by the most appropriate and efficient method. It also makes the use of third-party tools more efficient: because they handle only part of the workload rather than all of it, they consume fewer resources and cost less.
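A minimal sketch of such a modular document pipeline might look like the code below. Each stage is a stand-in: keyword matching plays the role of a small classification model, regular expressions play the role of an extraction model, and simple truncation plays the role of a summarizer. All function names, rules, and the sample document are hypothetical; the point is that each stage can be swapped for a better component without touching the others.

```python
import re
from typing import Dict


def classify(text: str) -> str:
    """Stage 1: decide the document type (stand-in for a small classifier model)."""
    lowered = text.lower()
    if "invoice" in lowered:
        return "invoice"
    if "agreement" in lowered or "contract" in lowered:
        return "contract"
    return "other"


def extract_fields(doc_type: str, text: str) -> Dict[str, str]:
    """Stage 2: pull key fields with plain regular expressions."""
    fields: Dict[str, str] = {}
    if doc_type == "invoice":
        total = re.search(r"total[:\s]+\$?([\d,]+\.\d{2})", text, re.IGNORECASE)
        if total:
            fields["total"] = total.group(1)
    date = re.search(r"\b(\d{4}-\d{2}-\d{2})\b", text)
    if date:
        fields["date"] = date.group(1)
    return fields


def summarize(text: str, max_words: int = 8) -> str:
    """Stage 3: truncate to the first words (a model call would go here)."""
    words = text.split()
    suffix = "..." if len(words) > max_words else ""
    return " ".join(words[:max_words]) + suffix


def process(text: str) -> dict:
    """Run the three stages in order and collect their results."""
    doc_type = classify(text)
    return {
        "type": doc_type,
        "fields": extract_fields(doc_type, text),
        "summary": summarize(text),
    }


sample = "Invoice #1042 issued 2025-03-14. Total: $1,250.00 due in 30 days."
print(process(sample))
```

In this layout, an LLM is only needed where rules fall short, such as summarizing long contracts, so the expensive component sees a fraction of the traffic.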

Leo started as a ChatGPT wrapper with a huge prompt (company details, scoring rules). It worked, but costs crept up. We shifted to a custom tool chain, cutting expenses and adding CRM integration. The result is a scalable tool providing us with a competitive edge.

Ready to act? Here’s how to kick things off:

AI adoption isn’t about chasing the latest trend. It is about solving real problems smarter, faster, and more effectively. Relying on wrapper solutions might get you in the game, but it won’t keep you there because competitors catch up, and costs spiral. Customers who expect instant magic will walk away unless you set clear goals and show how AI delivers. And without a strategy, “AI-first” is just a hollow label that investors see through.

Our framework offers a clear path forward. Start with a small win, analyze your needs, and grow deliberately. Whether it’s a quick PoC or a custom LLM solution like Leo, the key is clarity, iteration, and a focus on value.