Updated March 31, 2026

Strategy used to just be a meeting. You pulled reports, argued over what they meant, picked a direction, and moved on.

That’s not how it works anymore.

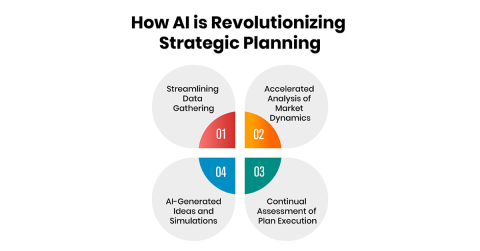

Now models are already shaping decisions before the meeting even happens. Pricing shifts because a model flagged elasticity. Product priorities shift as usage patterns change. Expansion plans get nudged by signals no one saw manually.

The pace is different. You feel it quickly.

At a basic level, the models are just finding patterns. But what matters is where you point them.

You don’t need every single type of model running at once. Most teams don’t even have the data discipline for that. What matters is choosing the right approach for a real decision and sticking with it long enough to see if it changes anything.

Churn models, demand forecasts, segmentation: none of it matters unless someone uses the output to act. Otherwise, it just becomes another layer of reporting.

Let’s look at how to get the most out of these models.

Dashboards tell you what happened. But you can look at them all day and still not commit to anything.

Models push you forward. They force you to ask what happens next.

That’s where most teams stall. Not because the models are wrong, but because acting on probabilities feels uncomfortable. You’re no longer explaining the past; you’re making a bet.

In practice, the shift is subtle. Instead of arguing over last quarter’s numbers, you start testing scenarios.

Those conversations are harder. But they’re the ones that move things.

Most teams get stuck here because they still treat models as reporting tools rather than decision tools. The real shift happens when you tie model output to measurable outcomes, especially when you’re tracking something as specific as AI ROI for enterprise. That’s when the conversation changes from “is this interesting?” to “is this actually moving the business?”
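To make “testing scenarios” concrete, here is a minimal Python sketch of a pricing what-if. It assumes a constant-elasticity demand curve and a hypothetical elasticity of -1.5. In practice that number would come from your own model or experiments. `scenario_revenue` is an illustrative helper, not a standard API.

```python
def scenario_revenue(base_price: float, base_units: float,
                     elasticity: float, price_change: float) -> float:
    """Project revenue for a fractional price change (e.g. 0.05 = +5%).

    Assumes constant-elasticity demand (a simplifying assumption):
    units scale by (1 + price_change) ** elasticity.
    """
    new_price = base_price * (1 + price_change)
    new_units = base_units * (1 + price_change) ** elasticity
    return new_price * new_units


if __name__ == "__main__":
    # Hypothetical inputs: $100 price, 1,000 units/month, elasticity of -1.5.
    for change in (-0.05, 0.0, 0.05):
        revenue = scenario_revenue(100.0, 1000.0, -1.5, change)
        print(f"price change {change:+.0%}: projected revenue {revenue:,.0f}")
```

With an elasticity below -1, a price increase lowers projected revenue. That is exactly the kind of bet worth arguing about before committing.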

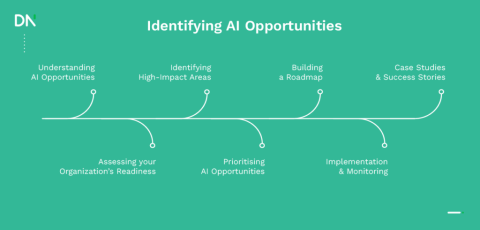

Most “unexpected” opportunities aren’t actually unexpected.

They show up quietly at first: a shift in search behavior, customers describing the same problem in slightly different ways, or support tickets repeating patterns that don’t quite match existing categories.

You don’t notice it right away. Then suddenly it’s obvious.

What models do is surface that earlier. Not perfectly, but earlier than anyone scanning manually.

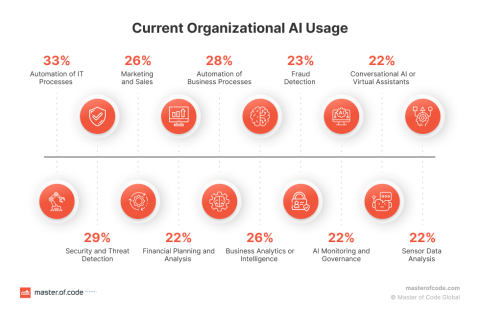

Clustering customer feedback, tracking anomalies in demand, picking up on long-tail behavior: this is where AI starts to feel useful. Based on what has already happened, it can quickly highlight something worth paying attention to.

One signal doesn’t matter. But a pattern does.
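Here is a minimal Python sketch of that idea. It assumes weekly request counts and a simple z-score rule against a baseline period, which is a deliberately basic stand-in for whatever anomaly model you actually use. `flag_periods`, `persistent_runs`, and the sample numbers are hypothetical. Single spikes get flagged, but only runs of consecutive flags are surfaced as patterns.

```python
from statistics import mean, stdev


def flag_periods(counts, baseline_len=8, z_threshold=2.0):
    """Flag periods after the baseline whose value is more than z_threshold
    sample standard deviations away from the baseline mean."""
    baseline = counts[:baseline_len]
    mu, sigma = mean(baseline), stdev(baseline)
    return [i for i in range(baseline_len, len(counts))
            if sigma > 0 and abs(counts[i] - mu) / sigma > z_threshold]


def persistent_runs(flags, min_run=3):
    """Keep only runs of at least min_run consecutive flagged periods,
    returned as (first_index, last_index) pairs."""
    runs, current = [], []
    for idx in flags:
        if current and idx == current[-1] + 1:
            current.append(idx)
        else:
            if len(current) >= min_run:
                runs.append((current[0], current[-1]))
            current = [idx]
    if len(current) >= min_run:
        runs.append((current[0], current[-1]))
    return runs


if __name__ == "__main__":
    # Made-up weekly request counts: a one-off spike at week 8, then a sustained rise.
    weekly_requests = [20, 22, 19, 21, 20, 18, 22, 20, 35, 21, 20, 30, 31, 33, 34]
    flags = flag_periods(weekly_requests)
    print("flagged weeks:", flags)
    print("patterns worth acting on:", persistent_runs(flags))
```

On this made-up series, the week 8 spike is flagged but dropped as a one-off. The sustained rise from week 11 onward survives as a pattern.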

Adrian Iorga, Founder & President of Stairhopper Movers, runs a business where demand patterns shift quickly across neighborhoods, seasons, and even specific building types, so spotting early signals matters more than reacting late.

He says, “What looks like random demand is usually not random at all. We started noticing small clusters, certain types of moves increasing in very specific areas, or repeat requests tied to building layouts or timing. If you wait for that to show up clearly in reports, you’re already behind.

The real advantage comes from catching those patterns early and adjusting how you staff, price, or prioritize jobs before it becomes obvious to everyone else.”

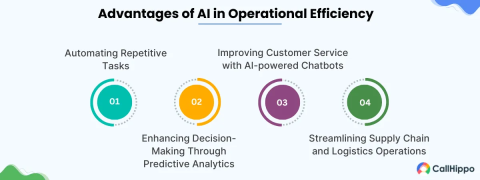

Most of the real gains come from removing friction. Here’s where AI can be put to use.

None of this is a breakthrough. But it helps you make decisions faster, and recover from bad ones faster, too. That’s usually where the advantage shows up: when you remove the low-value coordination that slows everything down.

Teams that pair automation with the right operational support, whether that’s internal ops or an executive assistant staffing agency, tend to move faster because someone actually owns the execution layer, not just the insight.

You won’t be right all the time, but you’ll learn faster than everyone else.

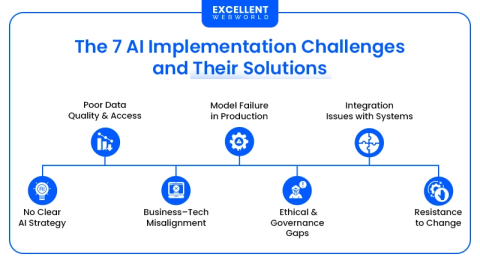

This is where things fall apart. Data is often inconsistent, and ownership isn’t always clear. Models work in a pilot and then quietly fail when scaled. People don’t trust the output, or worse, they ignore it completely.

The technical problems are usually solvable.

The behavioral ones are harder.

Conrad Wang, Managing Director of EnableU, works closely with organizations trying to operationalize new systems across teams, where adoption tends to break down long before the technology does.

He explains, “Most failures we see are not because the model doesn’t work. It’s because no one changes how decisions are actually made. Teams still rely on instinct, or they treat the output as optional.

Unless someone is accountable for using that signal in a real workflow, the model just becomes another dashboard that people ignore. The hard part isn’t building it, it’s making it part of how people operate every day.”

If no one owns the decision, the model doesn’t matter. If the data pipeline breaks every few weeks, the model doesn’t matter. If teams don’t understand what the output actually means, they won’t use it.

So you have to start smaller. Pick one decision. A real one. Something measurable.

Wire the data properly. Then keep a human in the loop where it matters, especially when the stakes are high or the context is messy.

Governance isn’t a side task either. You need to know where the data comes from, how the model behaves over time, and what happens when it drifts.

Otherwise, you’re just guessing with better tools.
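One common way to watch for drift is the Population Stability Index (PSI). It compares how a model input or score is distributed now versus at training time. The sketch below assumes both distributions are already bucketed into the same bins. The 0.1 and 0.25 cutoffs are a widely used rule of thumb, not a standard. The function names and sample counts are illustrative.

```python
import math


def psi(expected_counts: list[int], actual_counts: list[int], eps: float = 1e-6) -> float:
    """Population Stability Index between two histograms over the same bins."""
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    score = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Floor proportions at eps so empty bins don't cause log(0) or division by zero.
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        score += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return score


def drift_status(score: float) -> str:
    """Rule-of-thumb bands; tune them for your own data."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate"
    return "significant"


if __name__ == "__main__":
    baseline = [25, 25, 25, 25]   # hypothetical score distribution at training time
    this_month = [5, 15, 30, 50]  # hypothetical distribution seen in production
    score = psi(baseline, this_month)
    print(f"PSI = {score:.3f} ({drift_status(score)})")
```

Running a check like this on a schedule, with a named owner for the alert, is one way to make “what happens when it drifts” an actual process instead of a hope.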

Strategy is already shifting from something periodic to something continuous.

You see it in teams that are constantly testing, adjusting, and re-evaluating instead of waiting for a quarterly reset.

The systems are getting closer to that, too. More automated feedback loops. Faster iteration cycles. Better ways to simulate outcomes before committing resources.

That doesn’t replace judgment; it raises the bar for it. The teams that benefit aren’t the ones with the most advanced models. They’re the ones who can adapt quickly when the model shows something unexpected.

That part doesn’t change.

If you’re actually trying to execute on any of this, you don’t need more theory. You need people who’ve done it before.

That’s where platforms like Clutch become useful. It’s not just a directory. You can see how different firms actually work: real client reviews, project details, pricing, where they’ve delivered, and where they haven’t.

You’ll find verified reviews and detailed profiles across hundreds of thousands of providers, which makes it easier to move from “we should do this” to actually getting it done.

Instead of guessing who might be a good fit, you can compare providers side by side and understand how they handle problems similar to yours.

That cuts down a lot of wasted cycles.

Start by narrowing down your use case, whether that’s strategy, data, or implementation, then look at teams that have already solved that exact problem.