Updated April 30, 2026

Finding the right vendor used to be treated like a sourcing task.

But now, it’s one of those decisions that look small on paper and then quietly determines whether a project drags, whether internal teams lose confidence, or whether you end up redoing work you already paid for.

What changed first was marketplaces.


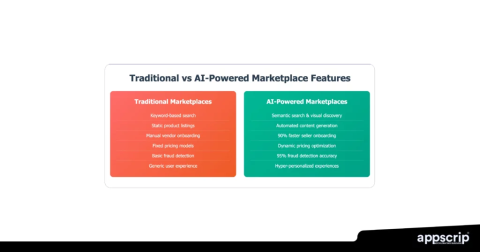

They made vendor discovery less chaotic. Now, AI is changing how that discovery actually happens, not by replacing marketplaces, but by sitting on top of them and changing how buyers narrow down choices.

That’s where things get interesting.

Before AI was layered on top of this process, and before marketplaces brought structure to it, vendor selection was far less defined.

For a long time, vendor discovery was based on proximity.

You’d ask someone you trusted. Maybe check a few sites. Take a couple of calls. Then pick the one that felt most credible.

It worked just enough to stick around.

But with complex projects involving multiple systems, tighter timelines, and real budget scrutiny, that approach started to break down. Not because people were careless, but because there was no consistent way to properly compare vendors.

Everything depended on interpretation.

Platforms didn’t eliminate risk, but they did remove many blind spots.

You suddenly had:

That matters more than it sounds.

Because the real problem before was a lack of comparable signals.

Marketplaces fixed that.

They also changed how agencies present themselves. Outcomes started to matter more than positioning. If you didn’t have proof, it showed.

Even with marketplaces, the process still required significant manual work.

Most importantly, teams still spent time filtering out vendors who were never going to be a fit.

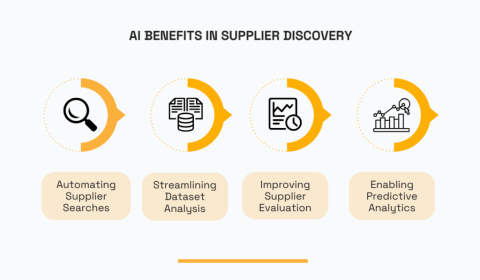

That’s the part AI is starting to remove.

Most of the noise around AI misses what it’s actually doing inside this process.

The useful part of AI here is simple.

It reads everything you don’t have time to read.

The Stanford AI Index has tracked how quickly these capabilities are evolving and where they’re already being applied.

This isn’t experimental anymore.

The value isn’t “better discovery.” It’s less wasted effort.

Instead of starting broad and narrowing slowly, you start closer to a usable shortlist.

Good systems already factor in:

Some go further and highlight likely risks based on similar past projects.

That changes how decisions get made.

Research from McKinsey & Company shows that data-driven procurement improves both speed and decision quality in supplier selection. That lines up with what actually happens when filtering improves early.

You take fewer irrelevant calls and spend more time evaluating real candidates.

That alone is a meaningful shift.

AI doesn’t fix vague thinking.

If the brief is unclear, the shortlist will be too. If the data behind vendors is weak, the recommendations won’t hold up.

You still need:

Without that, the system just gives you faster versions of the same bad options.

Ryan Hammill, Executive Director of Ancient Language Institute, works in a field where outcomes depend heavily on how clearly expectations are defined upfront, especially in educational delivery and curriculum design.

He explains, “When we evaluate partners or tools, the biggest issue is clarity. If the goals aren’t specific, whether that’s learning outcomes or delivery constraints, even a strong recommendation can miss the mark.

The same applies to AI-assisted decisions. It can narrow options quickly, but if the inputs are vague, you’re just getting a more efficient version of a misaligned choice.”

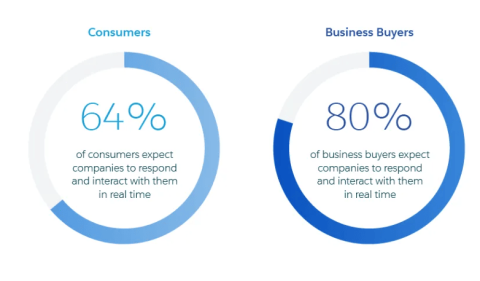

B2B buyers are starting to expect the same level of relevance they get elsewhere.

McKinsey has shown that personalization strongly influences decision-making, and that expectation has also moved into procurement tools.

Once buyers get used to results that reflect their actual context, generic listings feel inefficient.

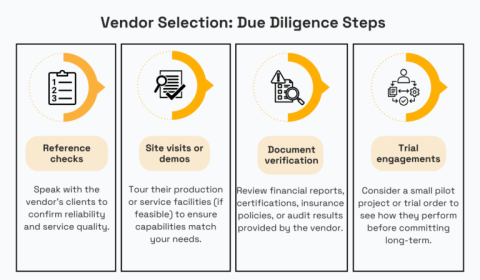

AI systems need good data. But they also need oversight.

Without it, you get:

This becomes even more serious in regulated or high-risk sectors. In areas like medical negligence, where outcomes directly affect liability and compliance, decisions can’t rely on opaque systems or incomplete data.

There needs to be a clear, defensible link between the vendor selection process and the criteria used.

Frameworks like the NIST AI Risk Management Framework exist because this is already a real issue, not a theoretical one.

Regulation is catching up, too. The EU AI Act makes it clear that transparency and accountability are becoming mandatory in enterprise AI use.

In procurement, that translates directly into trust. If you can’t explain why a vendor was recommended, you can’t defend the decision.
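One way to keep a recommendation explainable is to store how much each criterion contributed to the score, not just the total. The sketch below is a minimal illustration under stated assumptions: the function name `explain_score`, the criterion names, and the weights are all hypothetical, and no specific platform is known to score vendors this way.

```python
def explain_score(signals, weights):
    """Return (total, contributions) for one vendor.

    signals: criterion -> value between 0 and 1 (hypothetical inputs)
    weights: criterion -> importance chosen by the buying team
    """
    # A criterion with no recorded signal contributes nothing to the score.
    contributions = {name: weights[name] * signals.get(name, 0.0) for name in weights}
    return sum(contributions.values()), contributions


# Illustrative values only; these are not real vendor data.
weights = {"verified_reviews": 0.5, "industry_match": 0.3, "budget_fit": 0.2}
signals = {"verified_reviews": 0.8, "industry_match": 1.0}

total, contributions = explain_score(signals, weights)
for name, value in contributions.items():
    print(f"{name}: {value:.2f}")
print(f"total: {total:.2f}")
```

Because every criterion appears in the breakdown, a missing signal shows up as a visible zero rather than being silently absorbed into the total, which is the kind of gap a reviewer would want to question.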

The biggest shift is where you start.

Not with search.

With context.

Instead of browsing, you input:

And the system translates that into a shortlist.

That’s a different entry point entirely.
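As a rough sketch of what context-first shortlisting could look like: hard constraints remove vendors that cannot fit, and the rest are ranked by how much of the brief they cover. The field names (`required_skills`, `max_budget`, `max_weeks`, `min_project_size`, `typical_weeks`) and the ranking rule are assumptions made for illustration, not a description of how any real marketplace works.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    required_skills: set[str]
    max_budget: int   # total budget available for the project
    max_weeks: int    # latest acceptable delivery time, in weeks


@dataclass
class Vendor:
    name: str
    skills: set[str]
    min_project_size: int  # smallest budget the vendor accepts
    typical_weeks: int     # typical delivery time for similar work


def shortlist(brief, vendors, limit=3):
    """Filter on hard constraints, then rank by share of required skills covered."""
    if not brief.required_skills:
        # A vague brief cannot be matched meaningfully, so fail early.
        raise ValueError("brief must name at least one required skill")

    fits = [
        v for v in vendors
        if v.min_project_size <= brief.max_budget and v.typical_weeks <= brief.max_weeks
    ]

    def coverage(vendor):
        return len(vendor.skills & brief.required_skills) / len(brief.required_skills)

    ranked = sorted(fits, key=coverage, reverse=True)
    return [v.name for v in ranked[:limit]]


# Illustrative data only.
brief = Brief(required_skills={"ml", "data"}, max_budget=30_000, max_weeks=12)
vendors = [
    Vendor("A", {"ml", "data"}, 10_000, 8),
    Vendor("B", {"ml"}, 50_000, 6),
    Vendor("C", {"ml", "data", "mlops"}, 20_000, 20),
    Vendor("D", {"data"}, 5_000, 4),
]
print(shortlist(brief, vendors))
```

Rejecting an empty brief up front mirrors the point made earlier: if the inputs are vague, a faster shortlist is not a better one.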

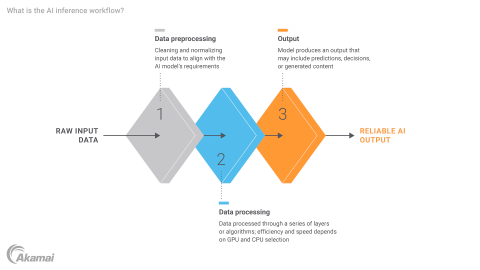

Matching is moving beyond filters.

You’ll see combinations of:

That last category used to be invisible. Now it’s being surfaced.

Christopher Skoropada, CEO of Appsvio, works closely with teams building and scaling software products, where vendor fit often comes down to how well teams operate together over time.

He says, “Technical capability is usually the baseline. Most vendors you shortlist can do the work. Where things succeed or fail is in how teams communicate, how quickly they adapt when requirements shift, and how they handle ambiguity.

Those are harder to quantify, but they’re often the deciding factor. If platforms can start surfacing those signals more clearly, the shortlisting process becomes much more reliable.”

Which is useful, because most project issues don’t come from capability gaps. They come from misalignment.

This becomes clearer in categories where execution details matter more than surface-level positioning.

For example, in apparel production, teams aren’t just comparing “custom printing” providers anymore. They’re looking at how vendors handle repeat orders, bulk consistency, and turnaround under pressure, which is closer to how suppliers of blank or wholesale t-shirts are evaluated than to how one-off designs are judged.

A team shares a project brief.

The platform:

No one is handing final decisions to a system.

What works is:

Every time teams try to skip that second step, they regret it.

A fair comparison comes down to how each approach handles volume, speed, and decision quality.

AI wins here. It processes more data faster and improves as it learns.

That’s not debatable.

Accuracy depends on data quality.

When marketplaces provide:

AI performs well. Without that, it doesn’t.

That’s why marketplaces and AI are complementary, not competitive.

This is where things get sensitive. People need to trust the process.

If recommendations feel opaque or unchallengeable, adoption drops, even if results are technically better.

Transparency matters more than raw performance.

This one is mixed.

AI reduces time spent searching and screening.

But it introduces:

Deloitte has highlighted that procurement value comes when process and technology mature together. In practice, that means AI only delivers real impact when teams have clear workflows, structured data, and consistent evaluation criteria in place.

Tools aside, the real question is what actually changes for the teams making these decisions.

The teams that get the most out of AI-assisted discovery define outcomes clearly, make constraints explicit, and structure their internal data.

Most teams underestimate how fragmented that data actually is. Project scope, vendor commitments, and delivery expectations often live across emails, docs, and internal tools.

That fragmentation only becomes visible when something goes wrong, when timelines slip, scope gets disputed, or no one can trace what was actually agreed.

Using a centralized system like contract management software helps tie those decisions back to actual agreements, which becomes critical when you’re evaluating performance or tracing where something went off track.

It also makes the evaluation process less subjective. You’re not relying on memory or scattered context; you’re working from what was formally defined.

Then they test the system in a low-risk category before expanding. Not to prove the tool works, but to see where their own process breaks under pressure.

Most agencies assume the challenge is winning the deal. Increasingly, it’s getting shortlisted in the first place.

Before, strong positioning could carry you further. A polished site, a confident pitch, a few recognizable logos were often enough to get you into consideration.

Now agencies are no longer just presenting themselves to people. Systems are evaluating them too.

That changes what gets picked up.

Systems are looking for:

If your work can’t be clearly tied to results or context, it becomes harder for both systems and buyers to understand where you actually fit.

On the buyer side, the shift isn’t just about using better tools. It’s about knowing what to trust inside them.

A few signals matter more than anything else:

That last one matters more than it seems. If you can’t question the output, you stop trusting it. And once that happens, the tool becomes something you work around rather than rely on.

If you can’t, you’re just moving faster without improving decisions.

Vendor discovery has already shifted from instinct to structure.

Now it’s moving toward assisted decision-making.

AI speeds up the process and makes it more informed. Marketplaces provide the data and context that make those systems useful.

But the goal hasn’t changed. You’re still trying to find a partner who can deliver.

The tools just make it harder to hide who actually can.

If you want a more structured way to evaluate vendors, platforms like Clutch can help you move from a broad search to a shortlist you can actually work with, based on verified reviews, real project data, and clear comparisons.

Author Bio: Brooke Webber is a passionate advocate for a people-first strategy in HR. Her major focus areas are workplace psychology and employee listening, where she has already accumulated five years of writing experience.